1RB0RB_1LC1RE_1LF0LD_1RA1LD_1RC1RB_---1LC

1RB0RB_1LC1RE_1LF0LD_1RA1LD_1RC1RB_---1LC (bbch) is a probviously non-halting BB(6) Cryptid found by @mxdys on 18 Aug 2024. Andrew Ducharme forward simulated the combined map (P(x), Q(x,y)) for 10^8 iterations. The TM did not yet halt. After 10^7 iterations, the TM reached a value (x',1) where x' ~ 10^604100, and after 10^8 iterations, it reached a value x ~ 10^(6.04305 x 10^6).

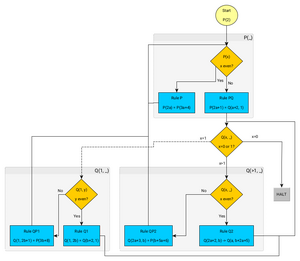

start: P(2) Rule P: P(2a) -> P(3a+4) Rule PQ: P(2a+1) -> Q(a+2,1) Rule QP1: Q(1,2b+1) -> P(3b+8) Rule Q1: Q(1,2b) -> Q(b+2,1) Rule QP2: Q(2a+3,b) -> P(b+5a+6) Rule Q2: Q(2a+2,b) -> Q(a,b+2a+5) Rule QH: Q(0,b) -> halt P(a) := 0^inf 1^a 011 <D 0^inf Q(a,b) := 0^inf 1^(2a+1) <D 0 1^b 0^inf

The rules have been proven in Lean.[1]

The TM will halt after N iterations of the map Q if it ever takes the form . This can only occur via P(2a+1) -> Q(a+2,1) or Q(1,2b) -> Q(b+2,1). The figure plots x for all cases (x,1) in the first 1000 iterations of R with a logarithmic scale along the vertical axis. The distance from any halting condition 2^M - 2 is growing exponentially with time, so it is only ever getting harder for this TM to halt.

On the trajectory starting from P(2), the Q(1,y) rules, at least through 10^5 steps, are never triggered. Thus, the implementation of the forward simulation can be made slightly faster (roughly 10% in practice) by, instead of nesting the execution of Q(x,y) within two if statements predicated on x=0 and x=1, only checking if x > 2, applying Q(x,y) if true, and throwing an exception if false.

Q(1, 0) has no predecessors in the map, it cannot be reached. Reachable Q-states always have b ≥ 1 (Stronger: b = 1 or b ≥ 5).

The backward chain to Q(0, b) is:

Q(0, b) ← Q(2, b') where b = b'+5 Q(2, b') ← Q(6, b'') where b' = b''+9 Q(6, ·) ← Q(14, ·) ← Q(30, ·) ← Q(62, ·) ← ...

General pattern: Q(2^(n+1) − 2, ·) chains down to Q(0, ·) through n halvings, all intermediate values being even.

The alternative path to Q(6, ·) is: Q(4, b) → Q(1, b+7) → Q((b+7)/2 + 2, 1) when b+7 is even (b odd), which gives Q(6, 1) when b = 1, i.e., Q(4, 1) → Q(1, 8) → Q(6, 1) → halt.

The P map

Since there is only one way the P phase can be left, it can be written as a function / map: where enters using Rule PQ. The recursive function is equivalent to:[2] Fixing the exponent, we get an infinite set of defining equations for , A natural question to ask, since needs as first argument to halt, is when is ? With the above definitions this leads to the infinite set of Diophantine equations: The start of a table of values where

n p(n) = 2^i-2 9 6 14 14 25 14 57 30 78 62 121 62 144 254 220 254 249 126 334 254 505 254 1017 510 1358 1022 2041 1022 4089 2046 5454 4094 8185 4094 14556 16382

The Q phase accumulator

Once the orbit enters the Q-phase at , rules Q2 and QP2 apply repeatedly (the first argument halves at each Q2 step, accumulating into the second argument) until the first argument becomes odd, at which point QP2 fires and returns to P. The net effect on the second argument is captured by a one-parameter accumulator, name it . It is recursively defined as The closed form with , again, the 2-adic valuation and the odd part of , shows can take any value: it is even less restricted than :

Probabilistic model for halting

The closed form for helps with analyzing the growth rate of Q-entry values across successive Q–P–Q cycles. The central result is that every cycle amplifies the Q-entry, with a minimum growth factor of 5/4, which comes from the dominant path: where both the Q-phase and P-phase take the aka odd shortcut. For the other parity combinations, the growth factor is strictly larger: gives growth ≈ 15/8 = 1.875, gives ≈ 45/16 ≈ 2.81, etc. There is no contraction in the Q–P–Q cycle. Since every Q–P–Q cycle grows, the Q-entry values diverge to infinity. We formalise this with a stochastic model that provides quantitative bounds on the probability of hitting specific targets.

We model the 2-adic valuation at each cycle as an independent Bernoulli trial: with probability 1/2 the cycle takes the dominant path (, growth factor 5/4), and with probability 1/2 it takes the path (growth factor ≥ 15/8). Formally, let with the product Bernoulli(1/2) measure P. Define the stochastic process If the machine reaches a Q-entry of order (with ) without halting, then the expected number of future near-misses of any target is at most[3] After 1 million rule steps this number shrinks to .

Is Q(1, _) reachable?

The other way to halt is the Q(1,_) path but, when simulating the first rule steps, the machine never reaches a Q(1,_) state. For that, the PQ rule would have to output Q(4,_), or Q2 would have to output Q(4,_). The latter happens when we have Q(x,_) with . So, ultimately there is a second system of Diophantine equations that could tell us for which input to P, PQ outputs a Q state that leads to Q(1,_).

References

- ↑ https://github.com/rwst/bbchallenge/blob/main/1RB0RB_1LC1RE_1LF0LD_1RA1LD_1RC1RB_---1LC/machine.lean

- ↑ https://github.com/rwst/bbchallenge/blob/main/1RB0RB_1LC1RE_1LF0LD_1RA1LD_1RC1RB_---1LC/Basics.lean

- ↑ https://github.com/rwst/bbchallenge/blob/main/1RB0RB_1LC1RE_1LF0LD_1RA1LD_1RC1RB_---1LC/basics.pdf